Podcasting has become one of our most intimate cultural forms. We often listen alone, through headphones, to voices that guide us through complex or deeply personal stories. Over time, we come to trust these voices not just for the information they convey, but for the sense that someone has listened, selected and shaped what we hear.

That relationship is unsettled by The Epstein Files, a new AI-generated podcast series that promises to process millions of Epstein-related documents into a coherent narrative. But when no one is clearly responsible for what we hear, the authority of the voice becomes harder to trust.

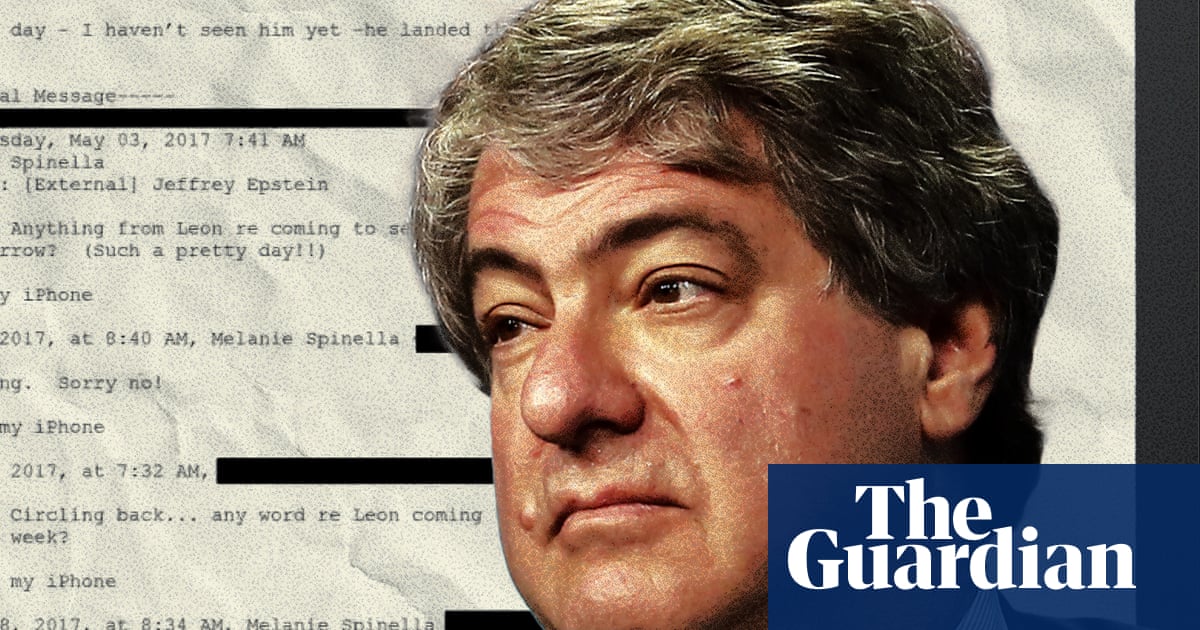

Created by data entrepreneur Adam Levy, the series draws on more than three million documents linked to Jeffrey Epstein and presents them as a “forensic audit” in the form of a conversational podcast between two AI-generated hosts.

Launched in February 2026, it has had more than two million downloads so far. It is a daily, self-updating show built through an automated pipeline that ingests, cross-references and scripts material using AI systems, operating at a speed that traditional newsrooms could only dream of.

At first listen, The Epstein Files works, sounding like a carefully crafted podcast. But despite the jokes, cross-talk, hesitations and filler words that mirror shows like This American Life, Serial or S-Town, there are no identifiable human speakers behind the voices. From research to publication, the process appears to be largely automated, in line with Levy’s intention to “strip the emotion” from the story.

The hosts also claim that the podcast acts as a filter, combining AI-assisted processing with “human analysis” to review the records rather than speculate. But this distinction is harder to verify when the processes behind selection, interpretation and emphasis remain largely invisible.

Emotion, judgement and interpretation are seen here as irritations or threats. However, systems that select, rank and narrate information do not become neutral simply because those decisions bypass direct human involvement.

The series presents itself as “the first AI native” investigative documentary. Yet it lacks many of the features we’ve come to expect. There are no interviews, no location recordings, and hardly any sonic cues to guide the listener. Instead, it relies almost entirely on simulated conversation.

The use of AI in podcasting is not simply a technical development. It disrupts the way shows are produced, structured and distributed. Rather than acting as a tool, these systems are beginning to reshape or obscure editorial processes that usually rely on human judgement.

The Epstein Files demonstrates how effectively AI can process vast quantities of material, producing a narrative that sounds coherent. But coherence is not the same as sense-making, and pattern recognition is not interpretation. Deciding what matters, what is credible, and what should be left out remains a human task.

Automation does not remove judgement. Instead it relocates it, often in ways that are harder to see. Decisions are embedded in training data, system design and weighting mechanisms while appearing as neutral or unbiased outputs.

When information can be processed at scale, the question is no longer just what we know, but how we decide what counts as knowledge. Editorial standards don’t disappear, but they become harder to identify.

The human voice carries assumptions of authenticity. It signals presence, experience and connection. When we hear someone speak, we tend to assume a relationship between voice and responsibility. That assumption becomes more difficult to sustain when the voice is artificial yet sounds convincingly human.